Tutorial on how to add a custom robots.txt file to a Blogger/Blogspot blog.

Maybe you’ve heard the term robots.txt. What is robots.txt? Is it necessary to set it up? What happens if I leave it alone? You probably have many other questions too, especially if you are a new blogger.

I have made this tutorial to make robots.txt easier to understand.

If you want your blog to be indexed and your pages crawled quickly, you should add a custom robots.txt file to your Blogger blog. It is also part of search engine optimization, so you should be familiar with the terms.


How Does It Work?

Robots.txt is a set of instructions that tells search engine robots which pages of our blog they may explore. In effect, robots.txt acts as a filter between our blog and search engines.

Let’s say a robot wants to visit a webpage URL, for example http://www.example.com/about.html. Before it does so, it checks http://www.example.com/robots.txt, and only then does it access the particular webpage.
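The check described above can be sketched in Python with the standard library module urllib.robotparser. This is only an illustration: the rules in EXAMPLE_ROBOTS_TXT, the /private/ folder and the crawler name ExampleBot are made up for this example and are not taken from any real site.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content for www.example.com (an assumption for
# this sketch; a real crawler would download it from /robots.txt first).
EXAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Allow: /
"""


def may_fetch(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if robots_txt lets user_agent crawl url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    for url in ("http://www.example.com/about.html",
                "http://www.example.com/private/notes.html"):
        allowed = may_fetch(EXAMPLE_ROBOTS_TXT, "ExampleBot", url)
        print(url, "->", "crawl" if allowed else "skip")
```

A well-behaved robot would run a check like may_fetch before every request and skip any URL for which it returns False.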

Every blog already has a robots.txt file provided by Blogger/Blogspot. By default, the robots.txt of a blog looks like this:

User-agent: Mediapartners-Google

Disallow:

User-agent: *

Disallow: /search

Allow: /

Sitemap: http://yourblogname.com/atom.xml?redirect=false&start-index=1&max-results=500

What Is The Meaning Of The Above Code?

User-agent: Mediapartners-Google

This line addresses the Google AdSense crawler, so the rule that follows it applies to AdSense bots only. If you’re not using Google AdSense on your blog, you can simply remove this line together with the Disallow: line below it.

Disallow:

An empty Disallow means that nothing is blocked: the AdSense bots may crawl every page on your blog.

User-agent: *

This line addresses all search engine robots.

Disallow: /search

Robots are not allowed to crawl anything under the /search folder, such as /search/label and /search?updated... pages. These URLs are listings of posts rather than specific pages, so they are kept out of the crawl.

Allow: /

This command allows all pages to be crawled, except those listed under Disallow above. The slash (/) stands for the root of the blog.

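Sitemap:

This line tells search engine robots where to find the sitemap of your blog. Here it points to the blog’s feed, listing up to 500 posts starting from the first one: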

Sitemap: http://yourblogname.com/atom.xml?redirect=false&start-index=1&max-results=500
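To see how these lines work together, here is a small sketch that feeds the default rules to Python's standard urllib.robotparser and asks what each robot may crawl. The label and post URLs are hypothetical examples on the placeholder blog, and Python's parser is only an approximation of how real search engine robots read the file.

```python
from urllib.robotparser import RobotFileParser

# Default rules as described above. Each User-agent line is kept directly
# above its own rules, with a blank line only between groups.
DEFAULT_RULES = """\
User-agent: Mediapartners-Google
Disallow:

User-agent: *
Disallow: /search
Allow: /

Sitemap: http://yourblogname.com/atom.xml?redirect=false&start-index=1&max-results=500
"""

parser = RobotFileParser()
parser.parse(DEFAULT_RULES.splitlines())

# Hypothetical URLs, used only for illustration.
label_page = "http://yourblogname.com/search/label/SEO"
post_page = "http://yourblogname.com/2020/01/my-post.html"

results = {
    # The AdSense crawler has an empty Disallow, so nothing is blocked.
    "adsense_label": parser.can_fetch("Mediapartners-Google", label_page),
    # Every other robot falls under "User-agent: *".
    "other_label": parser.can_fetch("Googlebot", label_page),
    "other_post": parser.can_fetch("Googlebot", post_page),
}

for name, allowed in results.items():
    print(name, "allowed" if allowed else "blocked")
```

In this sketch only the label page is blocked, and only for robots other than the AdSense crawler.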

How To Keep Robots Away From Certain Pages?

To keep a particular page from being crawled by Google, disallow it using the Disallow command. For example, if I don’t want my About Me page to be crawled by search engines, I simply paste the line Disallow: /p/about-me.html right after Disallow: /search.

The code will look like this:

User-agent: Mediapartners-Google

Disallow:

User-agent: *

Disallow: /search

Disallow: /p/about-me.html

Allow: /

Sitemap: http://yourblogname.com/atom.xml?redirect=false&start-index=1&max-results=500
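If you want to block more than one page, the same file can be generated instead of typed by hand. The sketch below is one possible way to do it in Python; build_robots_txt is a hypothetical helper written for this tutorial, not a Blogger feature, and /p/contact.html is an assumed page used only for the comparison.

```python
from typing import Iterable
from urllib.robotparser import RobotFileParser


def build_robots_txt(blog_url: str, extra_disallow: Iterable[str]) -> str:
    """Build the default rules plus one Disallow line per extra path."""
    lines = [
        "User-agent: Mediapartners-Google",
        "Disallow:",
        "",
        "User-agent: *",
        "Disallow: /search",
    ]
    # Extra pages go right after "Disallow: /search", as in the tutorial.
    lines += [f"Disallow: {path}" for path in extra_disallow]
    lines += [
        "Allow: /",
        "",
        f"Sitemap: {blog_url}/atom.xml?redirect=false&start-index=1&max-results=500",
    ]
    return "\n".join(lines) + "\n"


custom = build_robots_txt("http://yourblogname.com", ["/p/about-me.html"])
print(custom)

# Check the result with Python's own parser before pasting it into Blogger.
parser = RobotFileParser()
parser.parse(custom.splitlines())
about_allowed = parser.can_fetch("Googlebot", "http://yourblogname.com/p/about-me.html")
contact_allowed = parser.can_fetch("Googlebot", "http://yourblogname.com/p/contact.html")
print("about-me crawlable:", about_allowed)
print("contact crawlable:", contact_allowed)
```

The printed text can be pasted into the custom robots.txt box described in the steps below.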

How To Add Custom Robots.txt File In Blogger

Note: Before adding a custom robots.txt file in Blogger, keep one thing in mind: if you use the robots.txt file incorrectly, your entire blog may be ignored by search engines.
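One way to catch such a mistake before saving is to test the file against a few pages that must stay crawlable. The following is a minimal sketch using Python's standard urllib.robotparser; blocked_paths and the sample paths are hypothetical names chosen for this example.

```python
from urllib.robotparser import RobotFileParser


def blocked_paths(robots_txt, paths, user_agent="Googlebot"):
    """Return the paths that robots_txt forbids user_agent to crawl."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [p for p in paths if not parser.can_fetch(user_agent, p)]


# Hypothetical sample paths: the home page, a post, and a static page.
SAMPLE_PATHS = ["/", "/2020/01/my-post.html", "/p/contact.html"]

# "Disallow: /" on its own blocks the whole blog for every robot.
BROKEN = "User-agent: *\nDisallow: /\n"
# The intended rule only blocks the /search folder.
INTENDED = "User-agent: *\nDisallow: /search\nAllow: /\n"

print("broken file blocks:", blocked_paths(BROKEN, SAMPLE_PATHS))
print("intended file blocks:", blocked_paths(INTENDED, SAMPLE_PATHS))
```

If the list for your own file contains pages you want in search results, fix the file before pasting it into Blogger.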

Step1

Go to your Blogger Dashboard

Step2

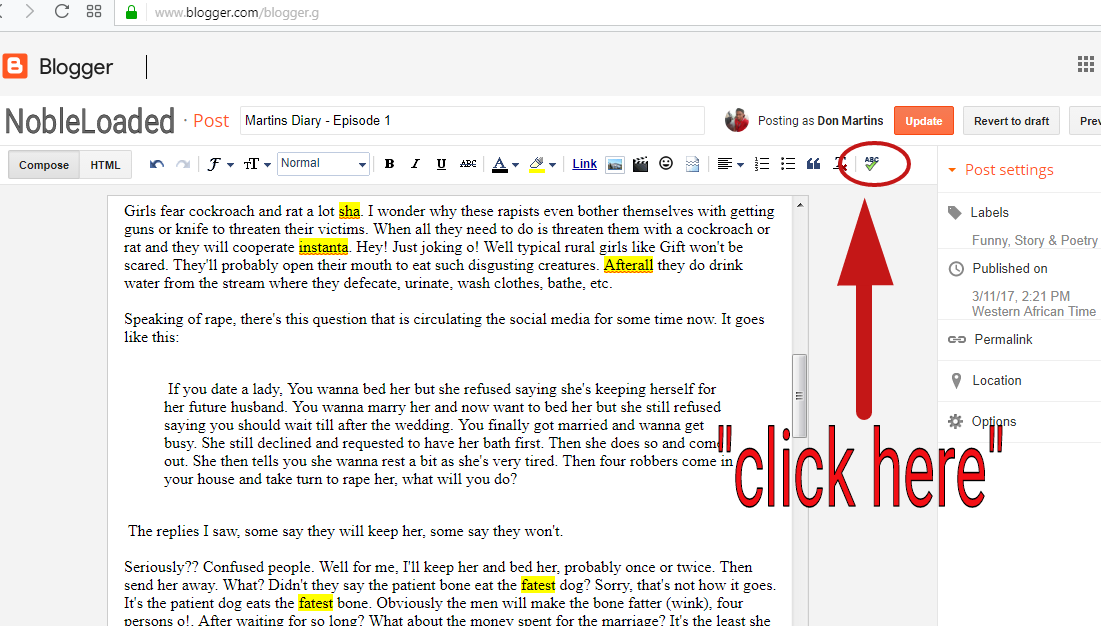

Now go to Settings>> Search Preferences>>Crawlers and indexing>>Custom robots.txt>> Edit >> Yes

Step3

Now paste your custom robots.txt file code in the box.

Step4

Now click on Save Changes button.

Step5

That’s all, You are done!

I hope this little tutorial helped.

If you still have any queries regarding this article, please let me know. You can leave your question in the comment box.

